How I Designed a 0→1 Civic Platform Used by 500+ Elected Officials in Just 45 Days

Role: Lead Product Designer

Company: GoodParty.org

Year: 2025 - 2026

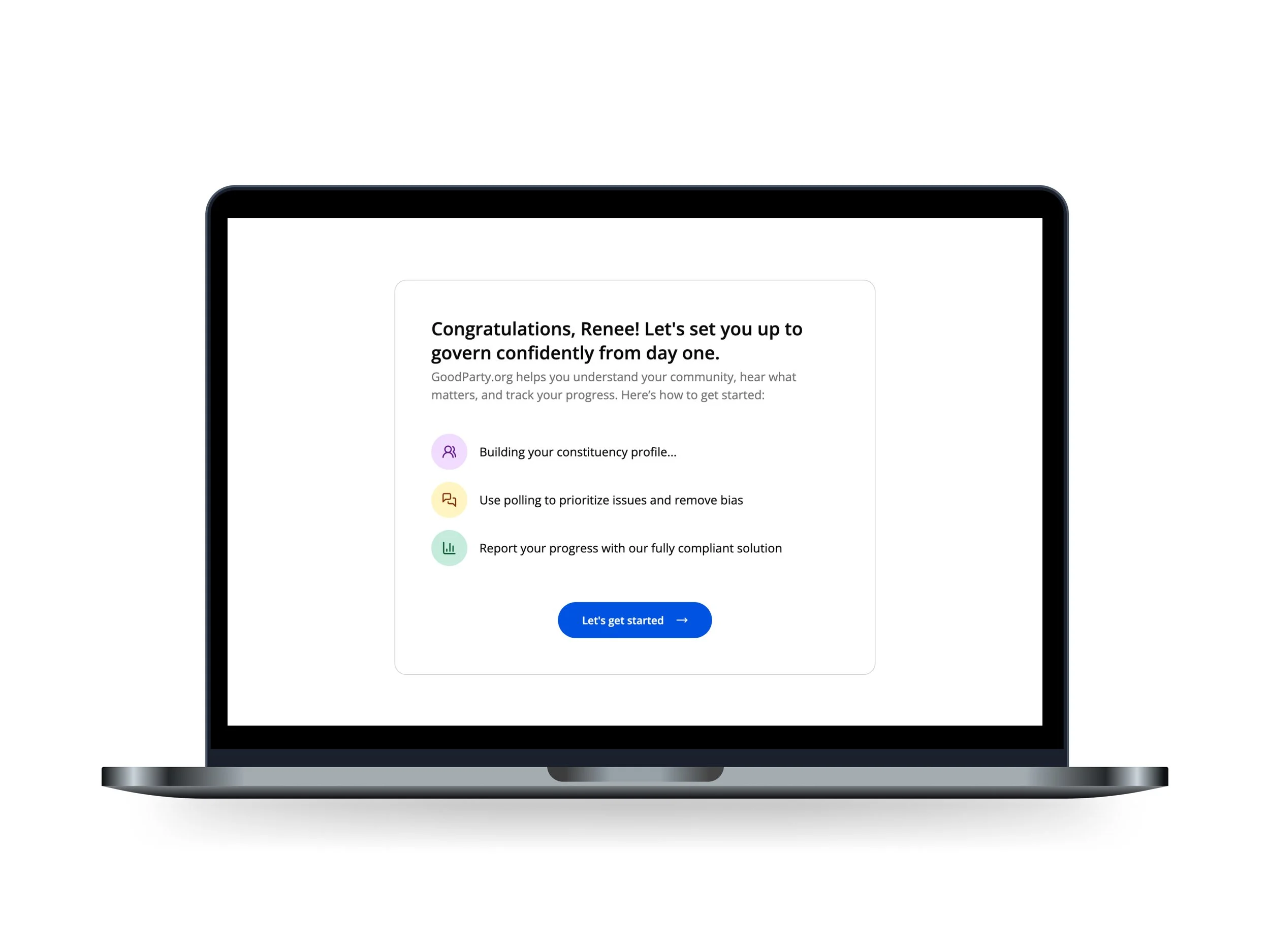

Full Prototype: Serve Master

To access: Click Rene Wells in the bottom left. Click prototype settings, archives, then click Onboarding v1.

TLDR: Impact

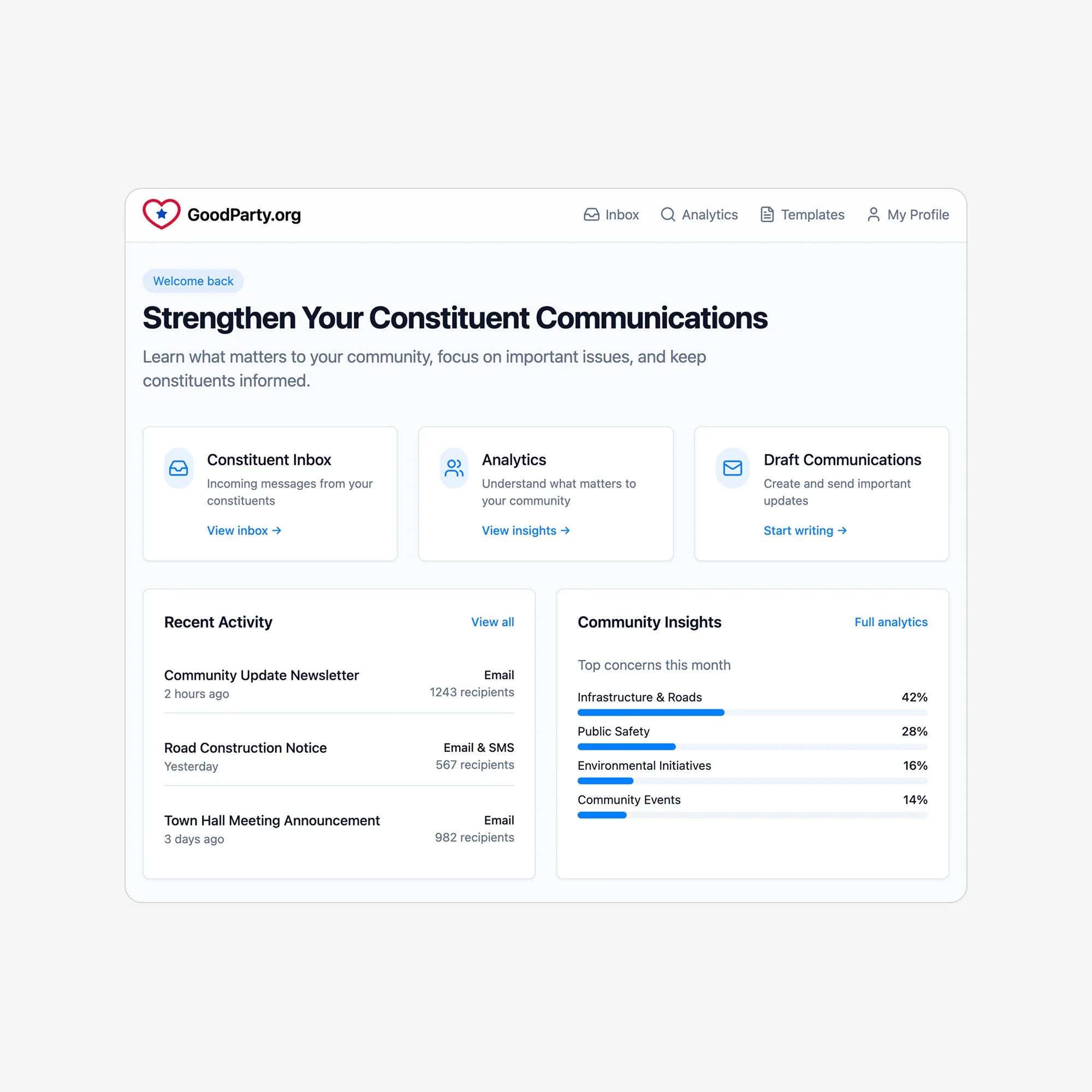

Built the first CRM and polling platform that helps non-partisan elected officials govern with representative data, not the loudest voices.

500+ elected officials onboarded within 45 days of soft launch

47% expanded beyond free allocation, validating demand for representative constituent data

100% activation via free starter poll

<6 minutes to launch first poll; <3 minutes to expand

The Problem

Independent elected officials were making policy decisions in the dark.

Feedback came from town halls, emails, and social media—channels dominated by vocal community members that don't represent the broader constituency. Officials couldn't tell whether an issue reflected real community sentiment or just who showed up and yelled the loudest.

Across interviews with newly elected officials, consistent signals emerged:

Most were understaffed, new to office, and balancing governance with their day jobs

Constituent data lived in fragmented tools (Excel, Facebook, email, paper notes)

There was no credible way to learn about or validate priorities

My Role

I led discovery, design, validation, and early execution of Serve.

Once we validated our direction, the team grew to 5, and I partnered with:

CPO: I owned research, product recommendations, and design; he owned go-to-market

AI engineer: I defined clustering requirements based on user research. We iterated through 4 versions until officials found the outputs clear and actionable

Engineering (2 full-stack): When engineering proposed investing in automation early on, I advocated for a phased approach—demonstrating through pilot data that manual operations would de-risk significant engineering investment

The Constraints

No existing category: No tools are built specifically for independent elected officials.

High ambiguity: We had an ambitious goal but no roadmap for how to get there.

Broken government infrastructure: Voter files and constituent lists are fragmented, outdated, or undocumented—especially in smaller districts. We had to build around unreliable data sources, not integrate with clean ones.

Immovable deadline: Soft launch had to happen before Election Day so newly elected officials could benefit immediately. Missing this window meant waiting another cycle, which compressed discovery, validation, and build into 6 months.

Approach

I established a standing panel of 10 elected officials (councils, school boards, mayors) representing our ICP across office types and constituency sizes.

We ran one discovery phase and five prototype rounds, updating continuously based on feedback.

Core learnings:

Officials universally struggled to distinguish vocal minorities from true sentiment

Outbound communication was harder—and far more valuable—than inbound

Legal constraints (open records, Brown Act) needed to shape the product outputs

What officials wanted most was confidence that they were prioritizing the right issues, not just more data

Quotes

"I have no problem hearing from the angry mob. I want to hear from everyone else."

— Cara Schulz

"When we're at these meetings, we maybe get one or two residents at a meeting and usually they're mad. In terms of what's affecting the constituency at large, there's not been a time where the village administrators said, 'we should try and hear from everyone in the village.'"

— Joshawa Stell

"I’ve been asking for feedback on social media. Therefore, I only get voices on social media."

— Dennis Hennen

You can check out the entire panel and deck here.

Pivot

Early on, I drove a strategic pivot that reshaped the product direction: from inbound collection to outbound, representative polling.

Early prototypes showed strong engagement with inbound submission. But research revealed the deeper problem: officials didn't know what their constituents cared about in the first place. Inbound would generate more noise without solving the underlying issue, leaving officials reactive rather than proactive.

I built the case for the pivot through three actions:

Synthesized research evidence: Across 10 design partner interviews, I identified that officials couldn't distinguish signal from noise—the root problem inbound wouldn't solve

Secured buy-in: Brought the recommendation to the CPO with supporting research. He agreed, and I had autonomy to define how we'd validate it

De-risked the shift: Proposed the phased manual approach to validate polling before committing engineering resources

What we learned

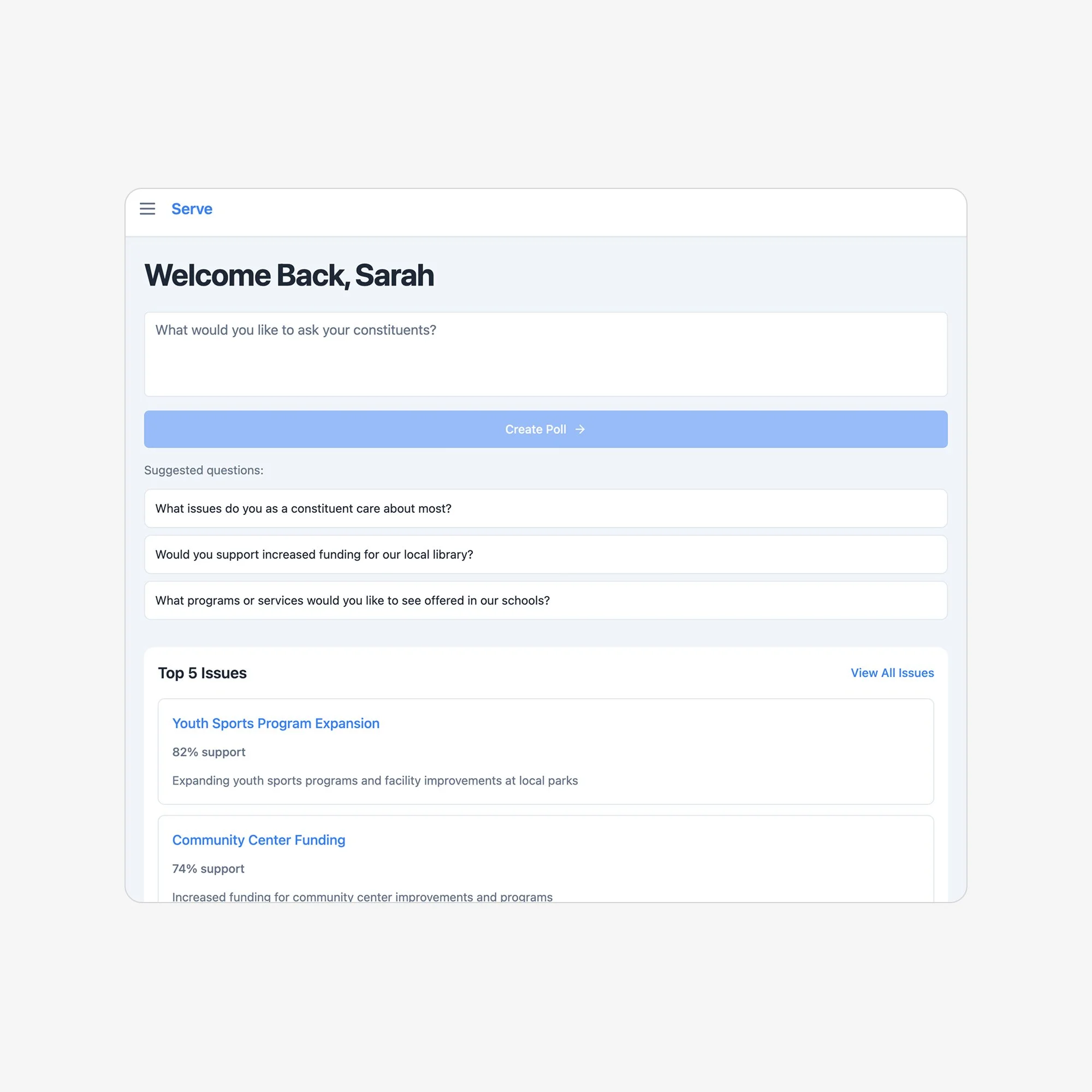

Polling would give officials something inbound never could: statistical confidence, credibility, and clarity.

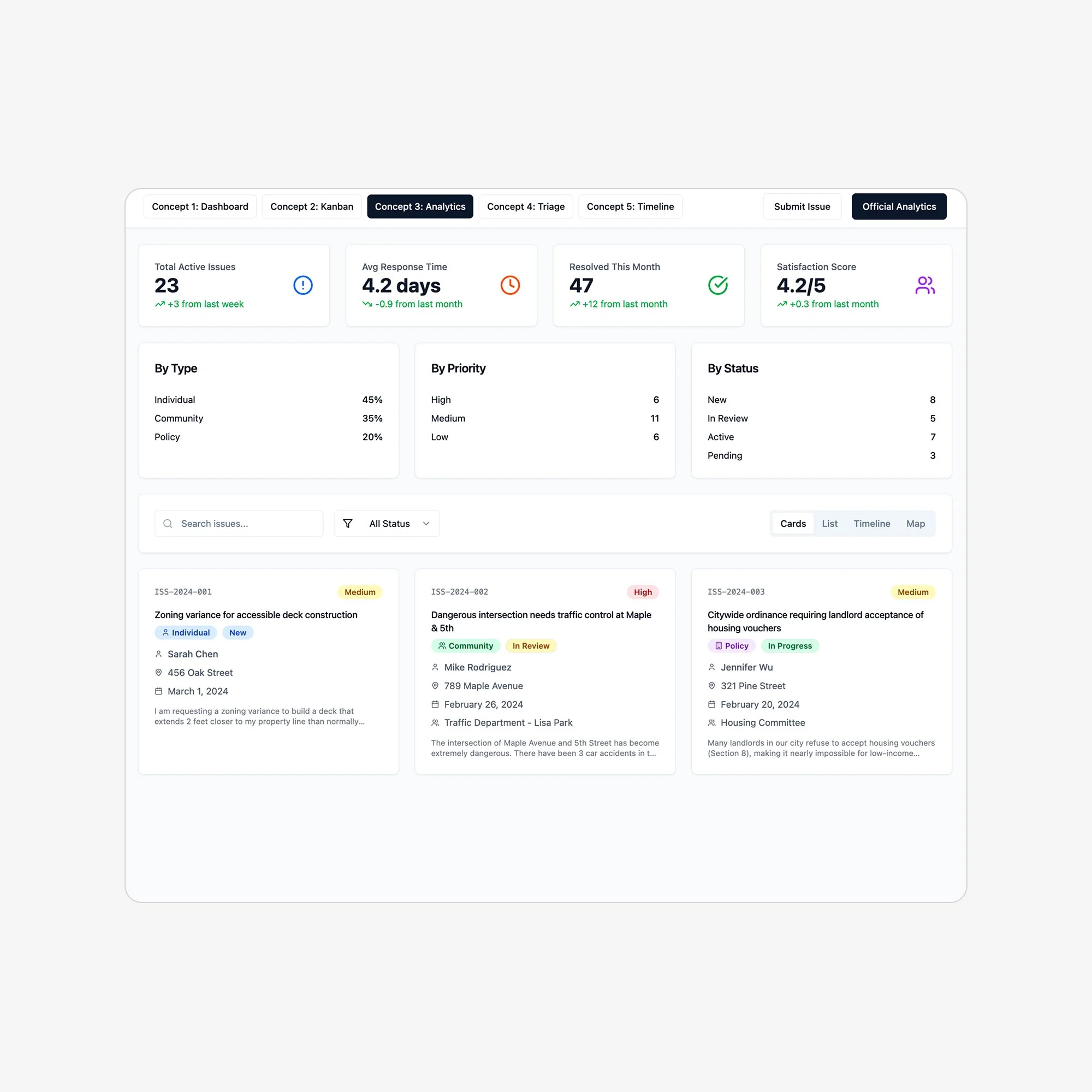

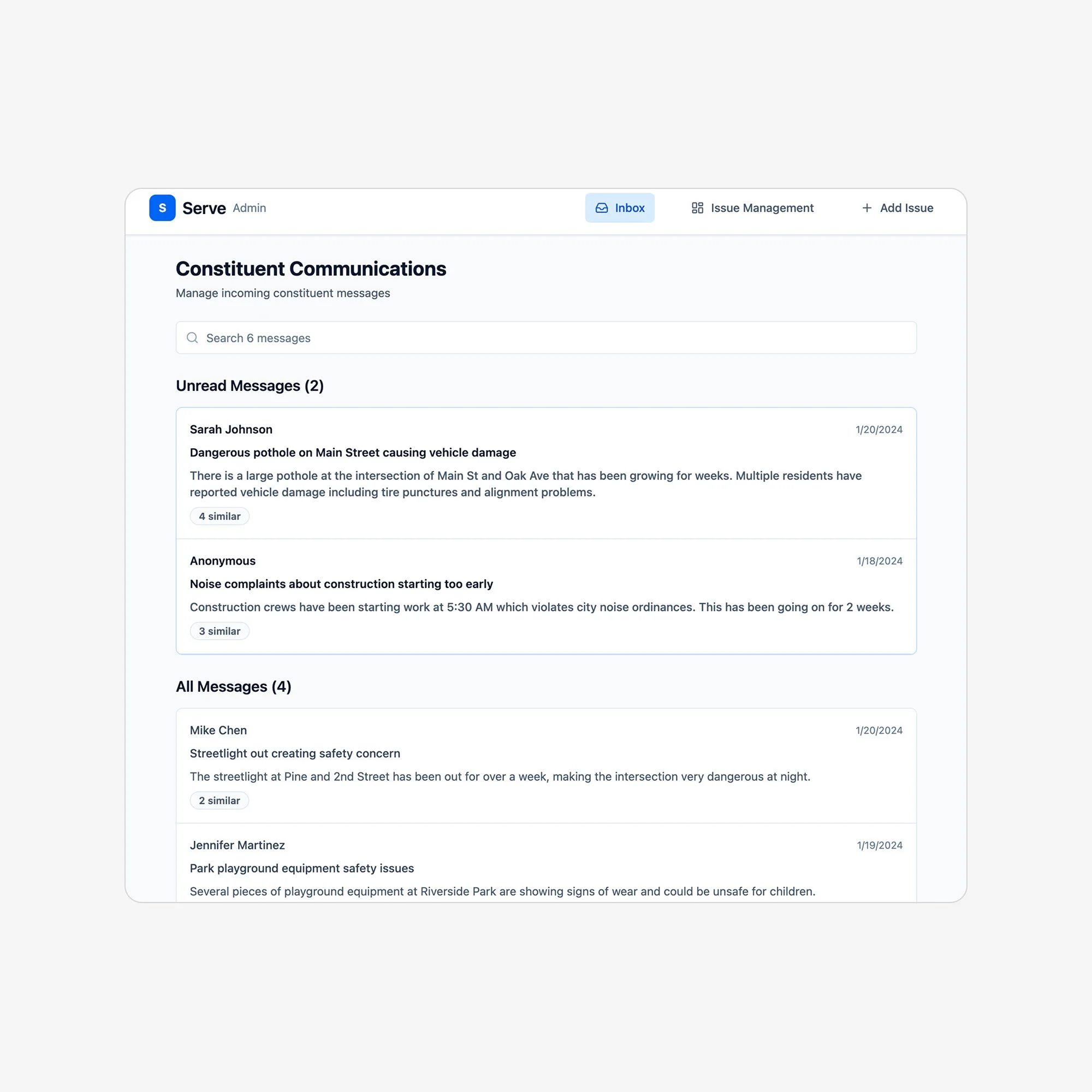

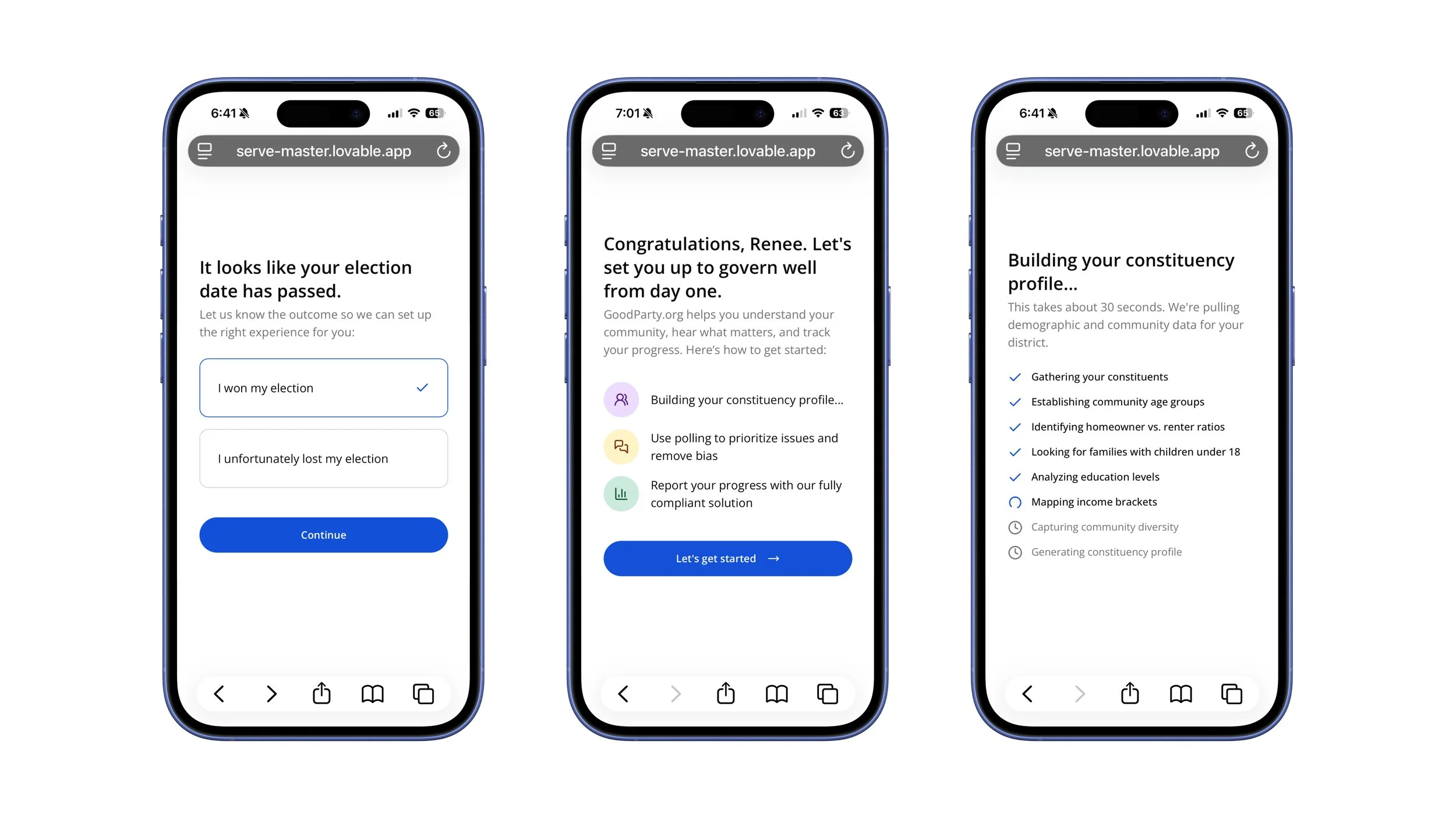

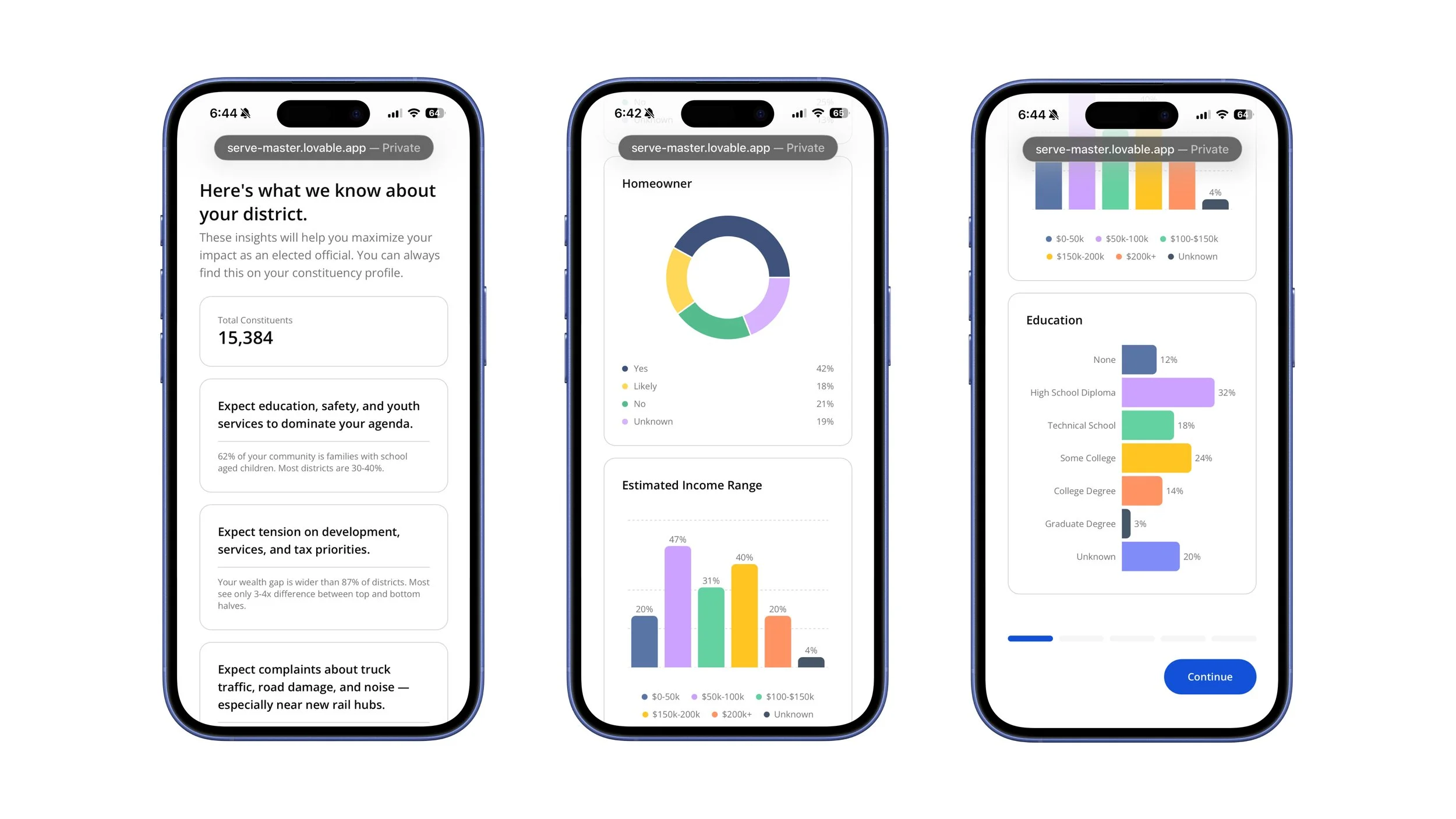

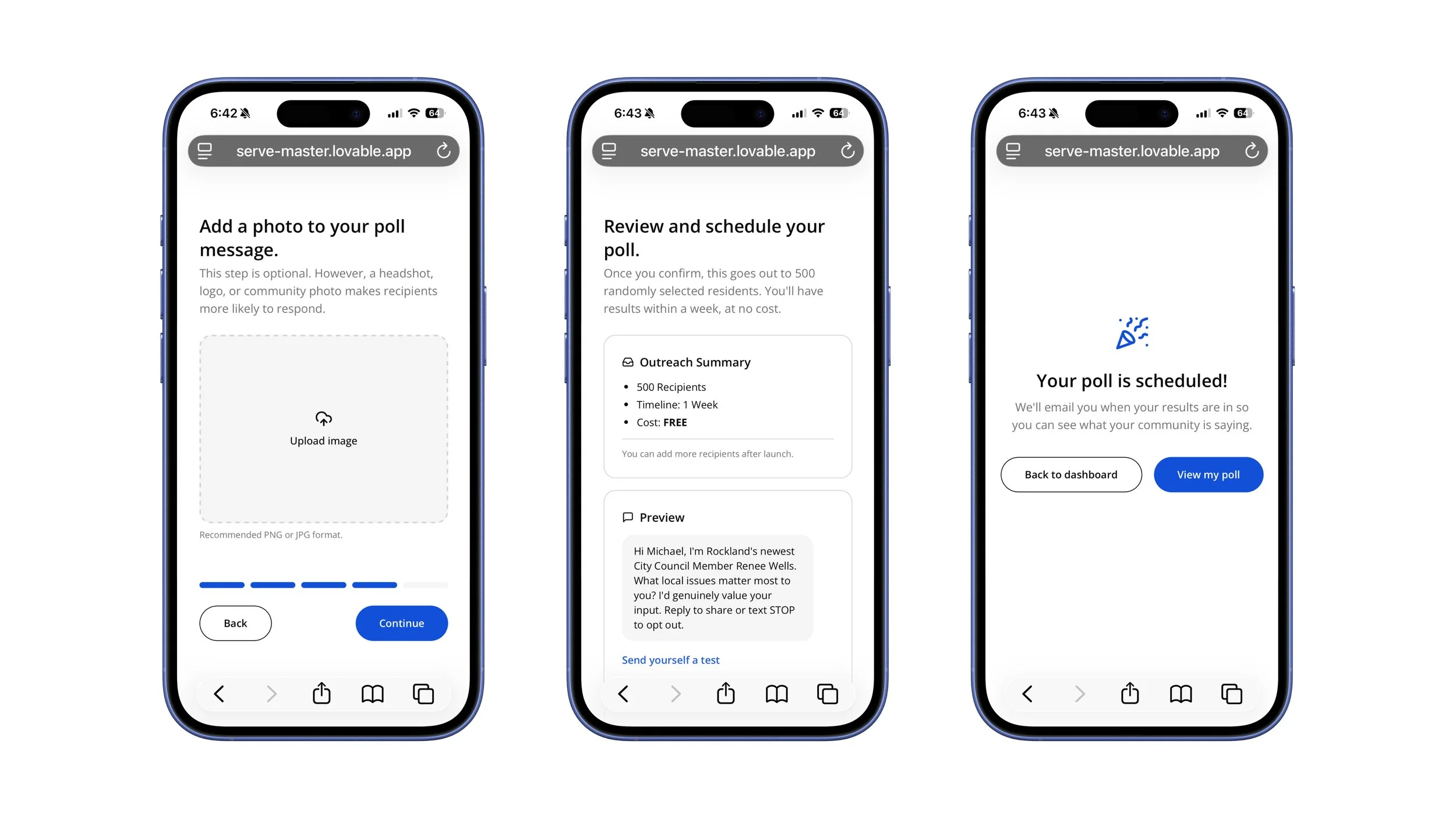

I went through many iterations in Lovable to validate our hypothesis.

Click each image to view some of the early explorations and prototypes we tested with our panel.

The Tradeoff

In the earliest phases of the product, we operated more like a concierge service than automated software: manually scheduling polls, clustering responses, and delivering insights. This approach reduced risk before scaling by letting us validate that the problem was real, the insights were valuable, and our assumptions held, all before committing to complex infrastructure.

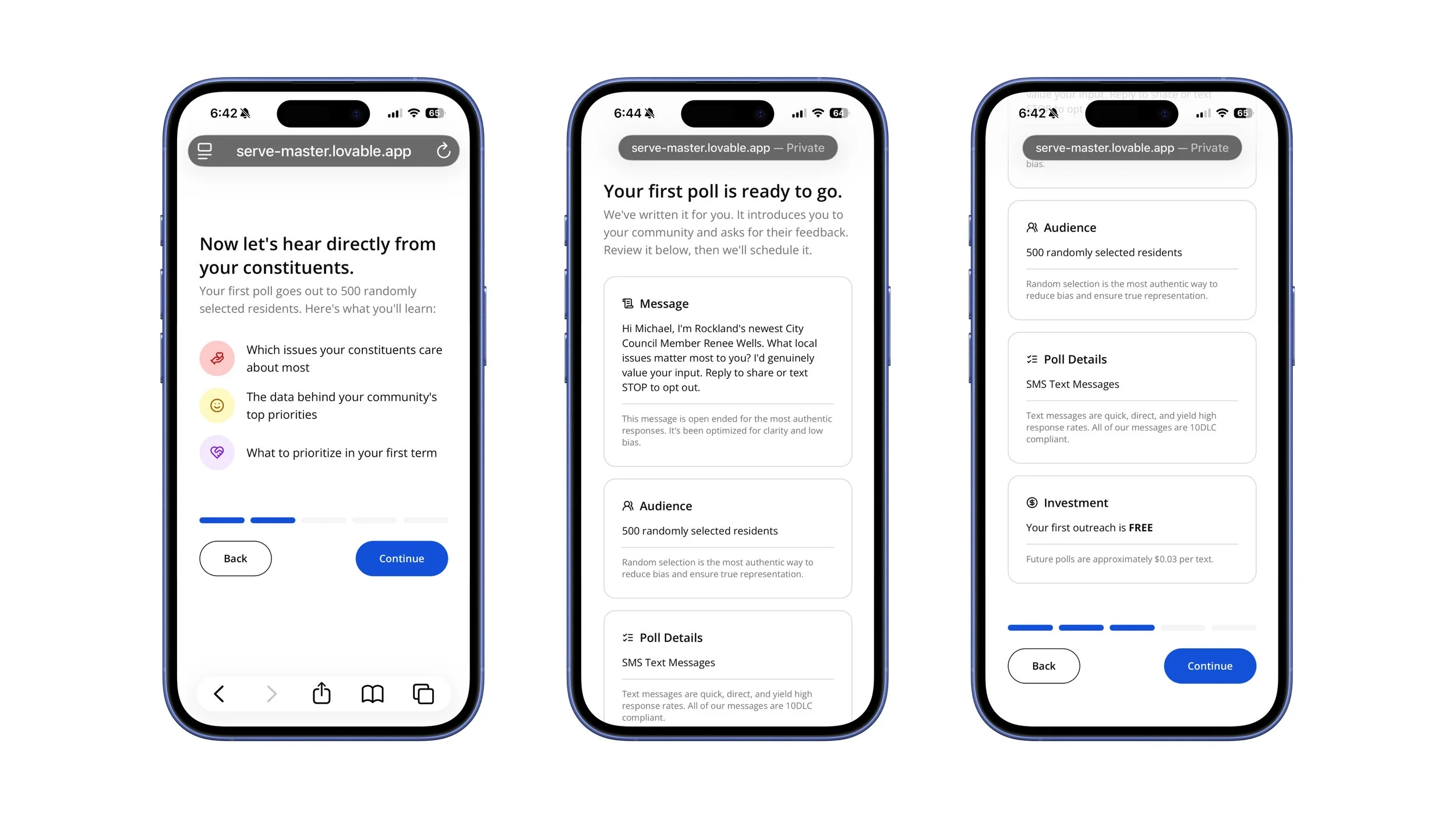

Getting there required embracing constraint. Elected officials couldn't choose their own poll questions, message language was fixed, and customization was intentionally limited. Over-investing too early would have risked optimizing the wrong solution and locking us into the wrong abstractions.

To offset that friction, we offered 500 free SMS messages per official, lowering the barrier to participation.

We continued to balance building and learning across three phases.

Phase 1: Manual service, maximum learning

We targeted newly elected officials who urgently needed constituent insight. Everything was manual:

Sales outreach

SMS scheduling via an external platform

Human-reviewed AI clustering (Claude, Gemini, ChatGPT)

PDF results delivered over Zoom

This validated core value without premature infrastructure investment.

Phase 2: Manual systems, in-product delivery

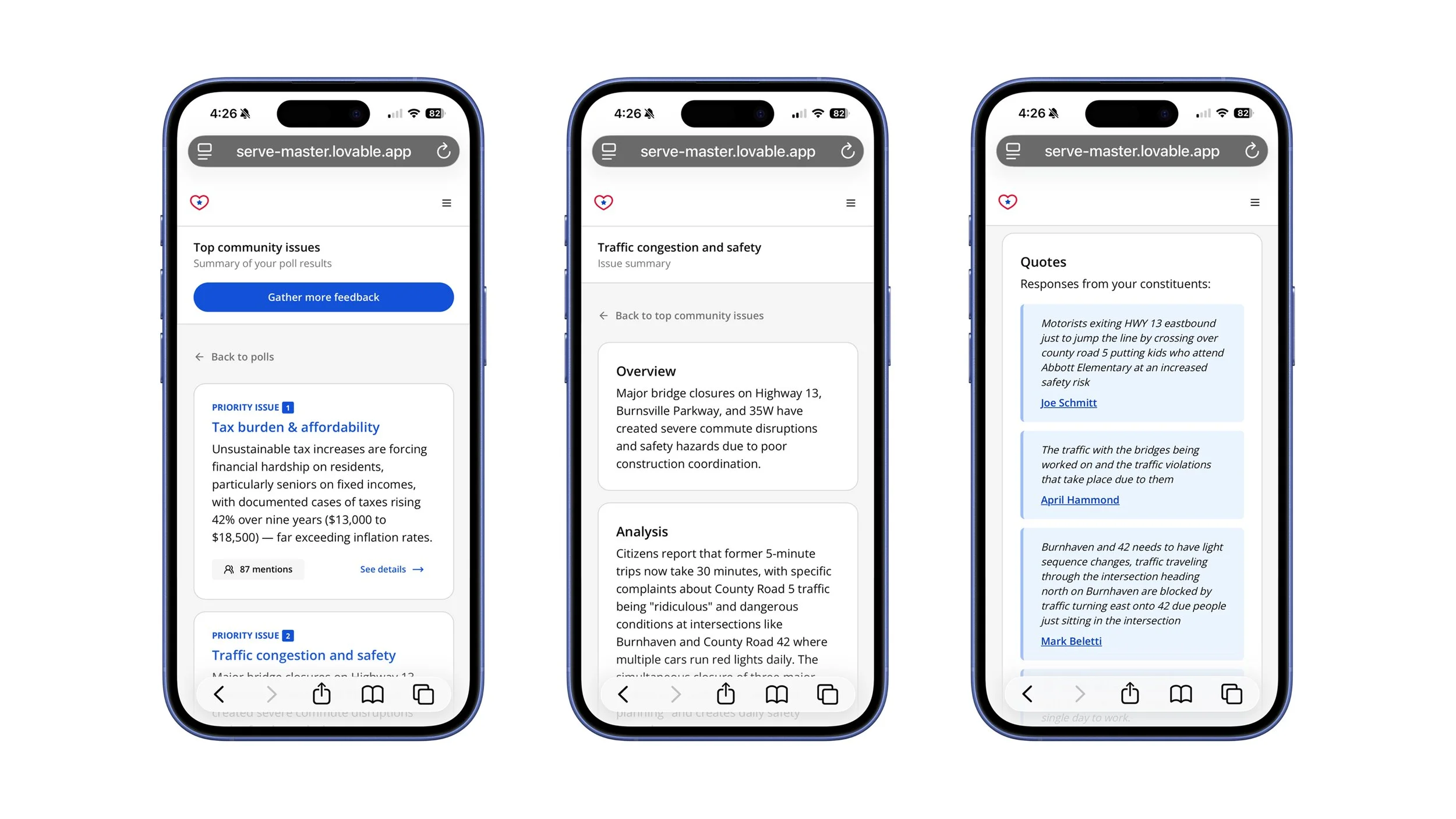

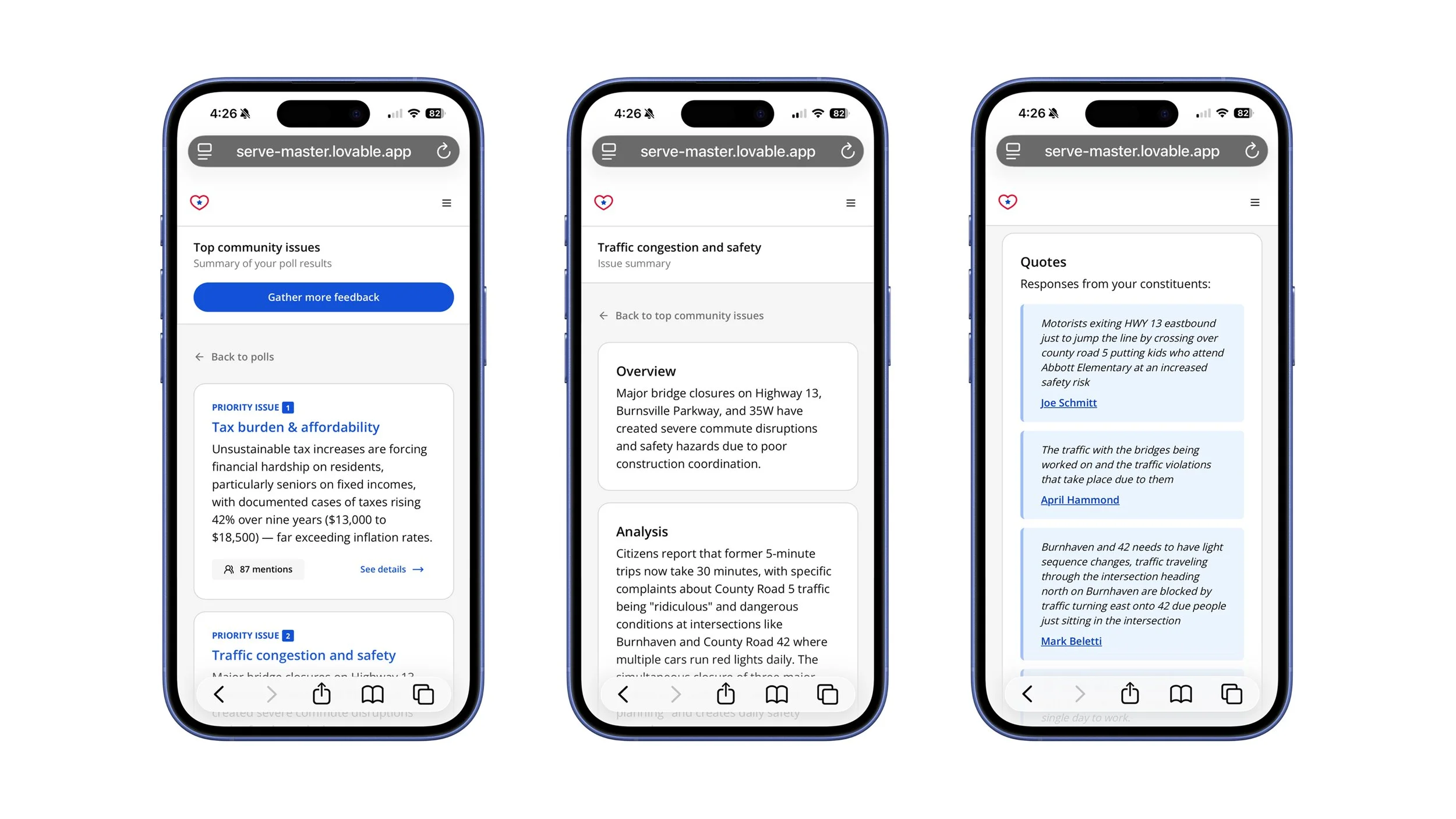

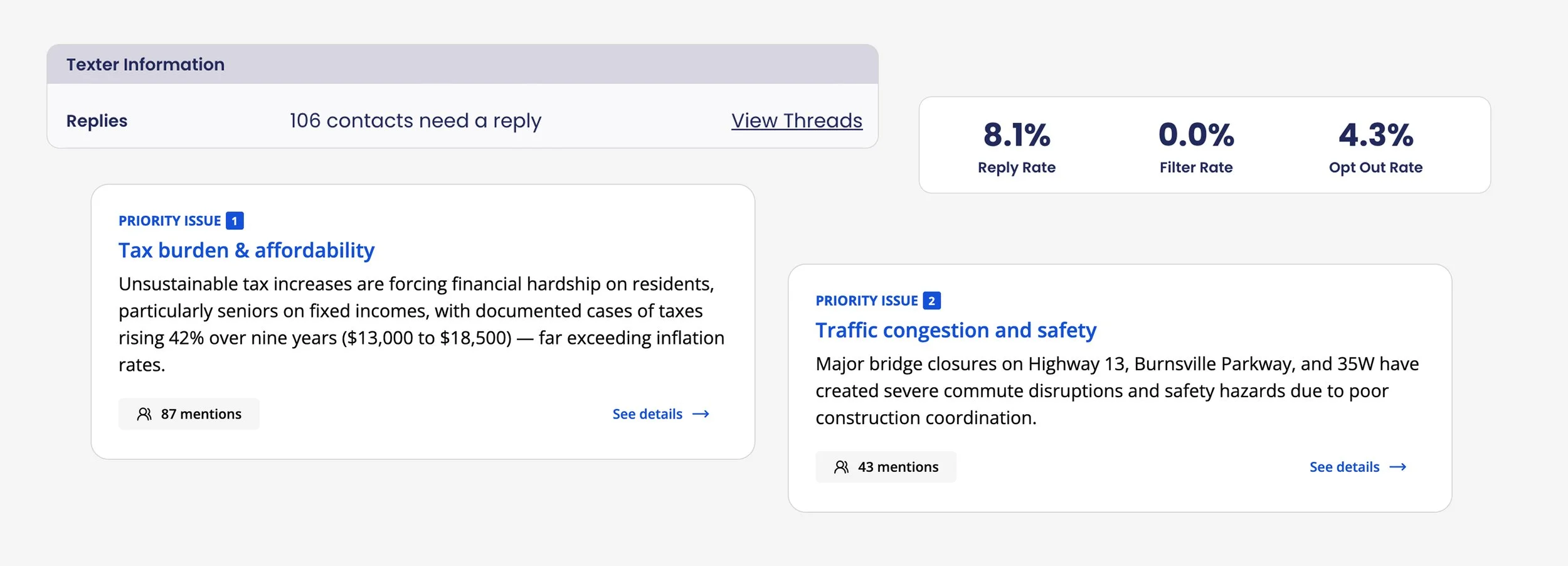

We expanded to a broader set of officials while keeping operations largely manual. The key shift was where results were delivered:

Officials received an automated email/text when the results were ready

One click brought them into the product to view results

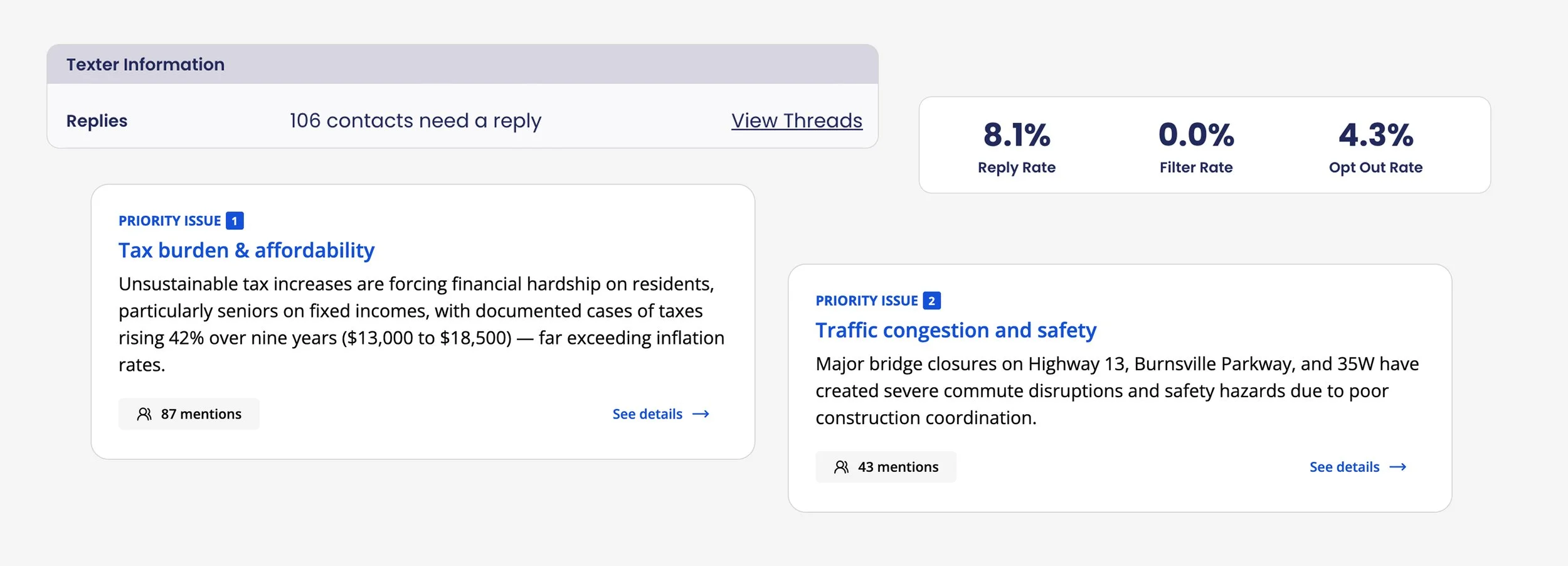

Results showed AI-clustered responses with summaries and representative constituent quotes

This phase tested usability, trust, and comprehension of AI-generated clusters while keeping backend complexity low.

Phase 3: Real product, "fake" backend

The experience felt fully real: officials could launch polls directly from the product. The backend remained manual while we continued to validate demand and feature needs and to confirm, through data, whether automation was worth the investment.

Phase 3 also introduced custom polls in beta—our #1 feature request in user research calls.

The Unlock

By running the earliest phases manually and offering a free activation poll, we answered critical questions quickly:

Did the results actually provide value?

Did the AI-driven clustering make sense to elected officials?

Where did we need to refine?

What days and times yielded the highest engagement?

What messaging performed best?

How did officials plan to act on the insights they received?

What were they willing to pay to access/expand their results?

How often would they use polling?

Strong signals across these dimensions informed what we chose to build next—and just as importantly, what we intentionally deferred or discarded.

—

After validating demand through Phase 3, we are currently automating core backend systems and are scheduled to launch publicly (to elected officials not in the GoodParty.org system) in July of 2026.

The Solution

You can also access the full prototype on Lovable: Serve Master

To access: Click Rene Wells in the bottom left. Click prototype settings, archives, then click Onboarding v1.

What I'd Do Differently

Cut inbound exploration sooner: We spent critical early weeks exploring inbound submission because officials surfaced it as their core pain. But they were reacting to existing problems, not articulating root needs. I'd set a tighter time box for validation before pivoting.

Advocate harder for a narrower ICP: School boards represent a large percentage of the officials we serve, but their challenges differ significantly from city council members or mayors. I raised this early, but didn't push hard enough. A tighter ICP from the start would have accelerated our learning.

Why this matters

This project reflects how I lead 0→1 work:

Addition is easy. Subtraction takes conviction.

The hard part isn’t building; it’s choosing what to leave out. Serve succeeded because we resisted accumulation: limited customization, manual systems before scale, and a pivot away from inbound tooling when research showed it solved the wrong problem.

That restraint created clarity, trust, and momentum, both for users and for the organization.

Most products don’t fail from missing features. They fail from losing focus.