Three Experiments to Move 9.1% Retention — And What We're Learning

Role: Lead Product Designer

Company: GoodParty.org

Year: 2026

Full Prototype: Serve Master

The Problem

First-term officials arrive motivated and leave without coming back

First-term officials engage at 54.5% in month one (higher than incumbents), then retain at just 9.1% in month two. They show up, complete a transaction, and disappear. 100% of returning users came back via team outreach. Zero organic return.

The product gives officials one thing to do, polling, and no reason to come back. The onboarding that gets them there is built around a sales rep on the phone, not the product itself. And beneath that, first-term officials face a deeper problem nobody has addressed yet: they don't know how to do the job they just won. An official worried about embarrassing themselves at their first council meeting isn't thinking about polling their constituents.

After leading the 0→1 build of Serve's polling product, I monitored performance and realized we needed to shift focus to retention and activation. These three experiments are bets on what moves that number, and what we're learning in real time.

The Experiments

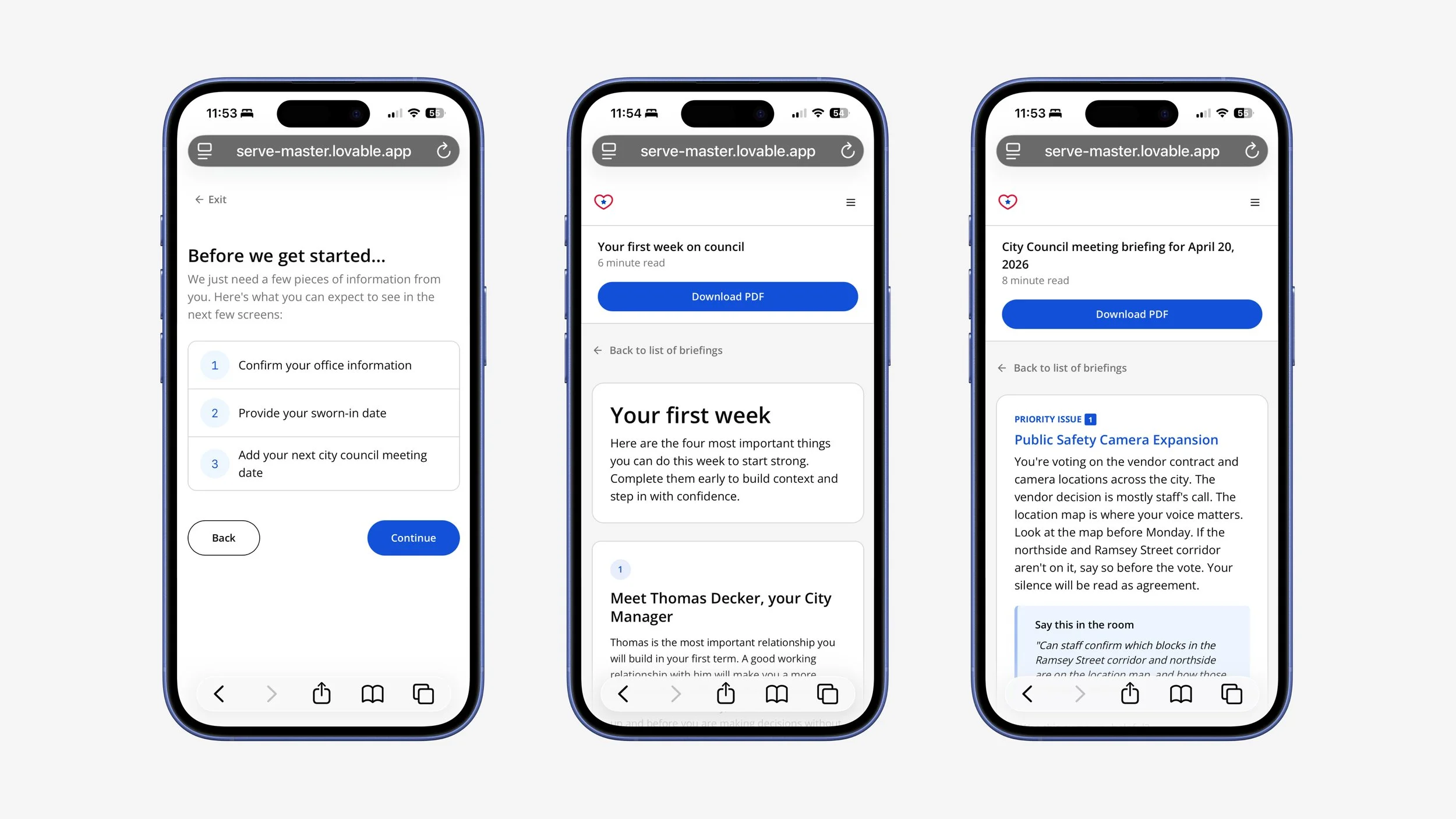

Experiment 1: Onboarding: from sales-led to product-led activation

Overview

Serve's onboarding was never designed to work without a human on the phone.

A rep walks every official through account creation, sends the first poll, and provides all the context the product doesn't, which is why the 91% completion rate is a sales metric, not a product metric. Strip away the rep and the product can't explain what it is, why it matters, or what to do next. As we scale, that motion breaks.

The Hypothesis

By removing onboarding blockers and exposing officials to multiple Serve surfaces early, we expect to increase 7-day engagement with non-polling features beyond the current baseline.

The Solution

We explored three approaches to the same question: what creates the activation moment for a newly elected official?

Approach A: Extend the existing flow with a governance briefing page after poll completion.

Approach B: Test whether the data itself is the product.

Approach C: Test whether connecting constituent data to specific upcoming decisions is what activates, not information alone.

I recommended Approach B over incremental optimization for phase 1. Approach A makes a broken funnel slightly better without a rep, but realistically wouldn't solve the problem. Approach B changes what officials experience, which is the only thing that could change retention.

As a first step, we are separating onboarding from the briefings so users can focus on one critical task at a time. The next hypothesis to test is whether ending onboarding on an education moment drives deeper activation than the transaction alone.

View the proposed solution on Lovable.

To access: Click Rene Wells in the bottom left. Click prototype settings, flows, then click Onboarding v2.

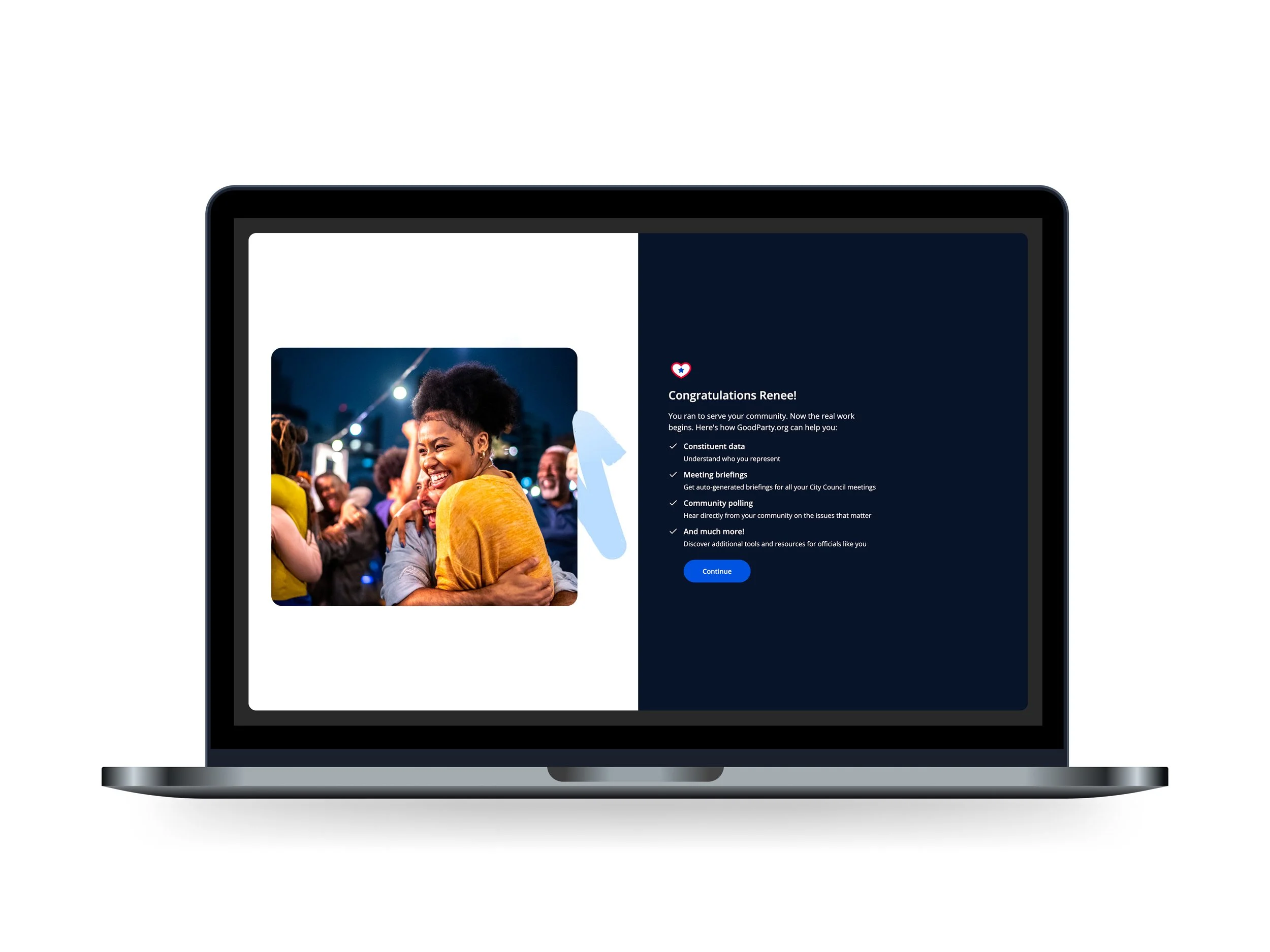

Experiment 2: Governance briefings: bite-sized education as a lifecycle touchpoint

Overview

Early plans called for a comprehensive governance orientation on first login.

User research calls with elected officials, however, changed that. Officials often mentioned feeling overwhelmed. They don't need a front-loaded education dump; they need governance education when it's relevant. Before budget season. Before their first public hearing. Before the vote that matters.

The Hypothesis

If we deliver governance education at key lifecycle moments instead of in a single onboarding orientation, officials will engage more frequently with governance content and use Serve more over time.

The Solution

Surface governance education at the moments that matter, not all at once.

A briefing on how the budget process works is surfaced early in an official's tenure, before they need to know which parts of the budget they can still influence. An overview of government structure is introduced in the first weeks in office, as they begin engaging with real workflows. These governance briefings share infrastructure with our next experiment (meeting briefings), which will allow us to ship both quickly while we validate whether in-product education moves retention.

Explore our early concepts for governance briefings in Lovable.

To access: Click briefings in the side nav.

Experiment 3: Meeting briefings: turning the governance calendar into a return trigger

Overview

Zero organic return means the product has no pull of its own.

Every official who came back did so because someone from the team reached out. Meeting briefings are the bet that the governance calendar is the habit trigger. If Serve can surface constituent data in the context of an upcoming vote, officials have a reason to open it before every council meeting without being nudged.

The Hypothesis

If officials receive a meeting briefing that connects their constituents' priorities to their upcoming agenda, they will return to Serve before meetings on a predictable cadence, establishing the governance calendar as the habit trigger instead of team outreach.

The Solution

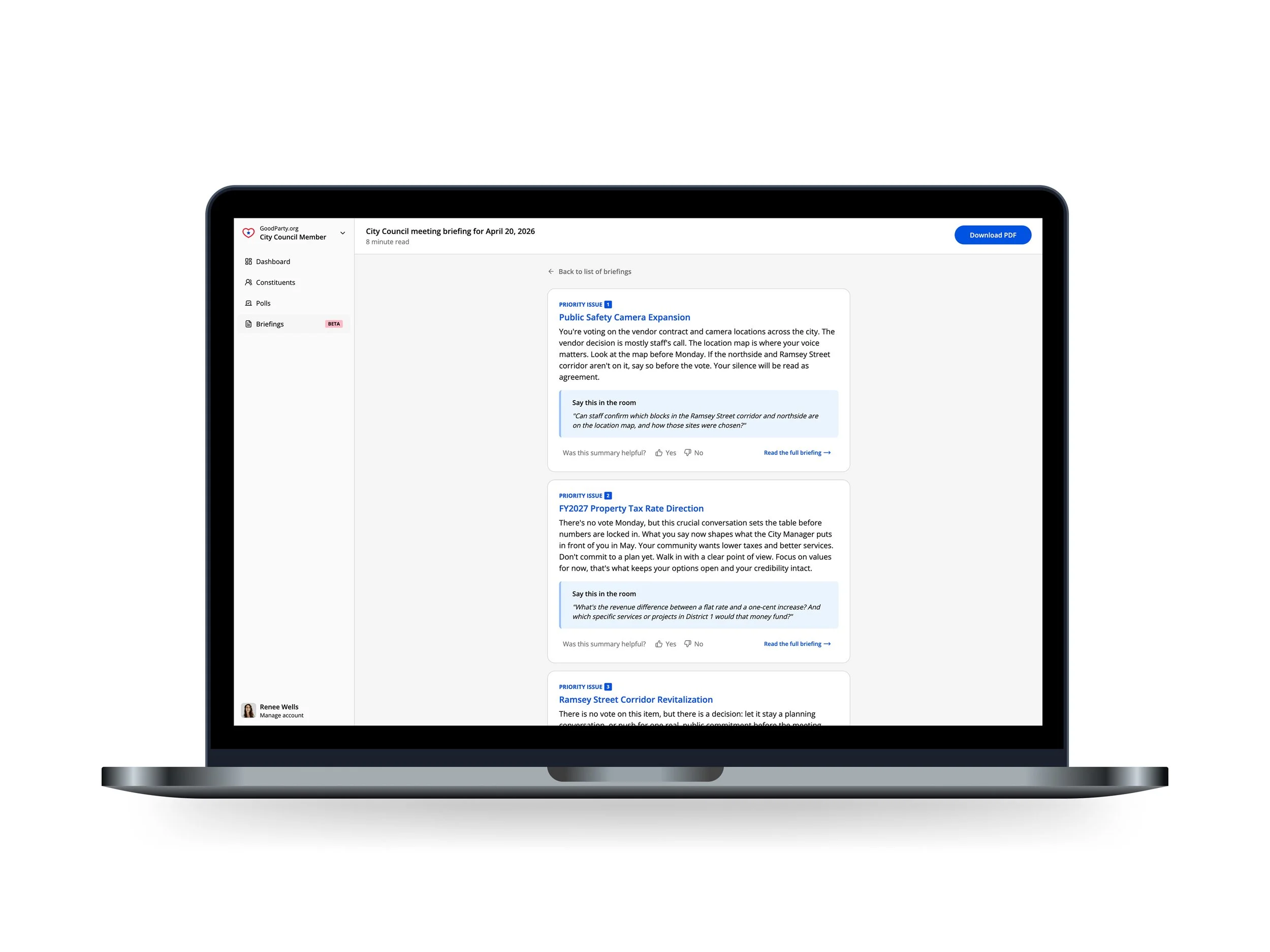

Make every briefing about what the official is being asked to decide, and what that decision means for constituents.

Each priority issue leads with the decision at hand, grounds it in the real-world impact on constituents, layers in constituent sentiment as context, and closes with a clear recommendation and language they can use in the room.

The agenda-first structure was a deliberate choice. Officials don't need more information; they need a point of view before they walk in. The binding constraint is agenda data: municipal agendas are published as few as three business days before a meeting, and posting rules vary from one municipality to the next. Scraping and parsing that data at scale (across thousands of local governments) is one of the hardest technical problems in civic tech.

View the first iteration of our meeting briefing in Lovable.

To access: Click briefings in the side nav and navigate to City Council meeting briefing for April 20, 2026.

Why this matters

There is no playbook for activating a first-term city council member.

These experiments are the playbook, built hypothesis by hypothesis, with explicit criteria to continue or stop, and the discipline to break apart what isn't working before committing to the wrong solution.